What is ELK?

ELK is an acronym for three open-source projects: Elasticsearch, Logstash, and Kibana.

Used together, they form a data collection and analysis stack: Logstash ingests the data, Elasticsearch stores and indexes it, and Kibana is used to query and visualize it.

Download the Project (Optional)

$ git clone https://github.com/paullee714/ELK-docker-python.git

Project Structure

ELK-docker-python
├── README.md
├── docker-elk
│   ├── LICENSE
│   ├── README.md
│   ├── docker-compose.yml
│   ├── docker-stack.yml
│   ├── elasticsearch
│   ├── extensions
│   ├── kibana
│   └── logstash
├── elk-flask
│   ├── __pycache__
│   ├── app.py
│   ├── elk_lib
│   └── route
├── requirements.txt
└── venv
    ├── bin
    ├── lib
    └── pyvenv.cfg

Setting Up ELK - Docker

Navigate to the docker-elk directory inside the project folder, then build and start the containers with docker-compose.

$ cd docker-elk

$ docker-compose build && docker-compose up -d

Execution Result

➜ docker-elk git:(develop) docker-compose build && docker-compose up -d

Building elasticsearch

Step 1/2 : ARG ELK_VERSION

Step 2/2 : FROM docker.elastic.co/elasticsearch/elasticsearch:${ELK_VERSION}

---> f29a1ee41030

Successfully built f29a1ee41030

Successfully tagged docker-elk_elasticsearch:latest

Building logstash

Step 1/2 : ARG ELK_VERSION

Step 2/2 : FROM docker.elastic.co/logstash/logstash:${ELK_VERSION}

---> fa5b3b1e9757

Successfully built fa5b3b1e9757

Successfully tagged docker-elk_logstash:latest

Building kibana

Step 1/2 : ARG ELK_VERSION

Step 2/2 : FROM docker.elastic.co/kibana/kibana:${ELK_VERSION}

---> f70986bc5191

Successfully built f70986bc5191

Successfully tagged docker-elk_kibana:latest

Starting docker-elk_elasticsearch_1 ... done

Starting docker-elk_kibana_1 ... done

Starting docker-elk_logstash_1 ... done

Verify that the three containers are running with docker ps.

➜ docker-elk git:(develop) docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

ae318f58a9af docker-elk_logstash "/usr/local/bin/dock…" 2 days ago Up 47 seconds 0.0.0.0:5000->5000/tcp, 0.0.0.0:9600->9600/tcp, 0.0.0.0:5000->5000/udp, 5044/tcp docker-elk_logstash_1

00a032b5c5c4 docker-elk_kibana "/usr/local/bin/dumb…" 2 days ago Up 47 seconds 0.0.0.0:5601->5601/tcp docker-elk_kibana_1

3b62a3ba2e21 docker-elk_elasticsearch "/usr/local/bin/dock…" 2 days ago Up 47 seconds 0.0.0.0:9200->9200/tcp, 0.0.0.0:9300->9300/tcp docker-elk_elasticsearch_1

ELK Port Configuration

According to the docker ps output, each service publishes the following ports:

Elasticsearch : 9200, 9300

Logstash : 5000 (TCP and UDP), 9600

Kibana : 5601
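As a quick alternative to reading the docker ps output, the published ports can be probed from Python. This is a minimal sketch using only the standard library; the port numbers come from the list above, while the host name localhost and the helper names (is_port_open, check_elk_ports) are assumptions made for illustration. It only checks the TCP side of port 5000.

```python
import socket

# Ports taken from the list above; the host is an assumption
# (containers published on the local machine).
ELK_PORTS = {
    "elasticsearch": [9200, 9300],
    "logstash": [5000, 9600],
    "kibana": [5601],
}


def is_port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_elk_ports(host: str = "localhost") -> dict:
    """Map each service name to {port: reachable} for the ports listed above."""
    return {
        service: {port: is_port_open(host, port) for port in ports}
        for service, ports in ELK_PORTS.items()
    }


if __name__ == "__main__":
    for service, ports in check_elk_ports().items():
        for port, reachable in ports.items():
            print(f"{service}:{port} {'open' if reachable else 'closed'}")
```

An open port only shows that something is listening. Elasticsearch and Kibana may still need some time after startup before they answer requests.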

Let's look at the docker-compose.yml file and the config file of each service.

In the docker-compose.yml file below, each service bind-mounts its own config file into the container and reads its settings from it:

Elasticsearch : /elasticsearch/config/elasticsearch.yml

Logstash : /logstash/config/logstash.yml

Kibana : /kibana/config/kibana.yml

- docker-elk docker-compose.yml file

# /ELK-docker-python/docker-elk/docker-compose.yml
version: '3.2'

services:
  elasticsearch:
    build:
      context: elasticsearch/
      args:
        ELK_VERSION: $ELK_VERSION
    volumes:
      - type: bind
        source: ./elasticsearch/config/elasticsearch.yml
        target: /usr/share/elasticsearch/config/elasticsearch.yml
        read_only: true
      - type: volume
        source: elasticsearch
        target: /usr/share/elasticsearch/data
    ports:
      - "9200:9200"
      - "9300:9300"
    environment:
      ES_JAVA_OPTS: "-Xmx256m -Xms256m"
      ELASTIC_PASSWORD: changeme
      # Use single node discovery in order to disable production mode and avoid bootstrap checks
      # see https://www.elastic.co/guide/en/elasticsearch/reference/current/bootstrap-checks.html
      discovery.type: single-node
    networks:
      - elk

  logstash:
    build:
      context: logstash/
      args:
        ELK_VERSION: $ELK_VERSION
    volumes:
      - type: bind
        source: ./logstash/config/logstash.yml
        target: /usr/share/logstash/config/logstash.yml
        read_only: true
      - type: bind
        source: ./logstash/pipeline
        target: /usr/share/logstash/pipeline
        read_only: true
    ports:
      - "5000:5000/tcp"
      - "5000:5000/udp"
      - "9600:9600"
    environment:
      LS_JAVA_OPTS: "-Xmx256m -Xms256m"
    networks:
      - elk
    depends_on:
      - elasticsearch

  kibana:
    build:
      context: kibana/
      args:
        ELK_VERSION: $ELK_VERSION
    volumes:
      - type: bind
        source: ./kibana/config/kibana.yml
        target: /usr/share/kibana/config/kibana.yml
        read_only: true
    ports:
      - "5601:5601"
    networks:
      - elk
    depends_on:
      - elasticsearch

networks:
  elk:
    driver: bridge

volumes:
  elasticsearch:

Logstash Logging Configuration

In the ELK stack, Logstash is the component that receives the Flask logs.

Logstash -> Elasticsearch -> Kibana (query/analysis)

Because every log enters the pipeline through Logstash, its configuration is the important part here.

Let's set the Elasticsearch index to 'elk-logger' and collect the logs there.

$ vim /ELK-docker-python/docker-elk/logstash/pipeline/logstash.conf

input {
  tcp {
    port => 5000
  }
}

## Add your filters / logstash plugins configuration here

output {
  elasticsearch {
    hosts => "elasticsearch:9200"
    user => "elastic"
    password => "changeme"
    index => "elk-logger"
  }
}

Creating a Simple Flask App

- requirements.txt

certifi==2020.4.5.1

click==7.1.2

elasticsearch==7.7.1

Flask==1.1.2

itsdangerous==1.1.0

Jinja2==2.11.2

MarkupSafe==1.1.1

python-dotenv==0.13.0

python-json-logger==0.1.11

python-logstash==0.4.6

python3-logstash==0.4.80

urllib3==1.25.9

Werkzeug==1.0.1

The requirements.txt file is included in the repository. Installing these packages in a virtual environment makes Flask and the other required modules available.

Flask Logger Configuration

import logging

import logstash

log_format = logging.Formatter('\n[%(levelname)s|%(name)s|%(filename)s:%(lineno)s] %(asctime)s > %(message)s')


def create_logger(logger_name):
    logger = logging.getLogger(logger_name)
    if len(logger.handlers) > 0:
        return logger  # Logger already exists

    logger.setLevel(logging.INFO)
    # Send every record to the Logstash TCP input listening on port 5000
    logger.addHandler(logstash.TCPLogstashHandler('localhost', 5000, version=1))
    return logger

In the logger setup, addHandler(logstash.TCPLogstashHandler('localhost', 5000, version=1)) attaches a handler that sends each log record to Logstash over TCP on port 5000, the same port opened by the tcp input in logstash.conf.
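To make the idea of a TCP log handler more concrete, here is a standard-library sketch of a handler that serializes each record as one JSON line and writes it to a socket. It is not the python-logstash implementation: the class name JsonLineTCPHandler, the function create_json_logger, and the JSON field names are all hypothetical and do not reproduce the exact schema that library emits.

```python
import json
import logging
import logging.handlers


class JsonLineTCPHandler(logging.handlers.SocketHandler):
    """Hypothetical handler: sends each record as one JSON line over TCP."""

    def makePickle(self, record: logging.LogRecord) -> bytes:
        # SocketHandler normally pickles the record; here the payload is
        # replaced with newline-terminated JSON. Field names are illustrative.
        event = {
            "message": record.getMessage(),
            "level": record.levelname,
            "logger_name": record.name,
            "path": record.pathname,
        }
        return json.dumps(event).encode("utf-8") + b"\n"


def create_json_logger(logger_name: str, host: str = "localhost", port: int = 5000) -> logging.Logger:
    """Return a logger that forwards INFO and above to host:port as JSON lines."""
    logger = logging.getLogger(logger_name)
    if logger.handlers:
        return logger  # Already configured
    logger.setLevel(logging.INFO)
    logger.addHandler(JsonLineTCPHandler(host, port))
    return logger
```

The socket is opened lazily on the first log call, so creating the logger does not fail when nothing is listening on the port yet.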

@elk_test.route('/', methods=['GET'])
def elk_test_show():
    logger = elk_logger.create_logger('elk-test-logger')
    logger.info('hello elk-test-logstash')
    return "hello world!"

Any function that writes to this logger, such as the route above, forwards its log messages to Logstash.

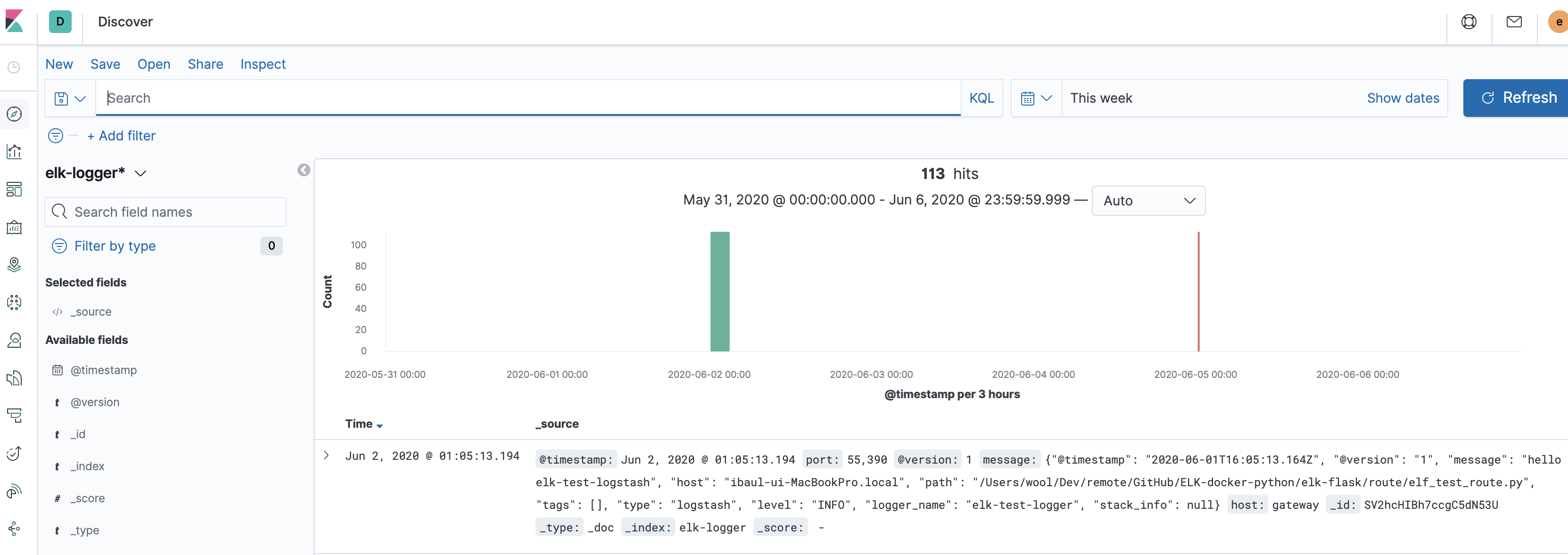

kibana에서 확인하기

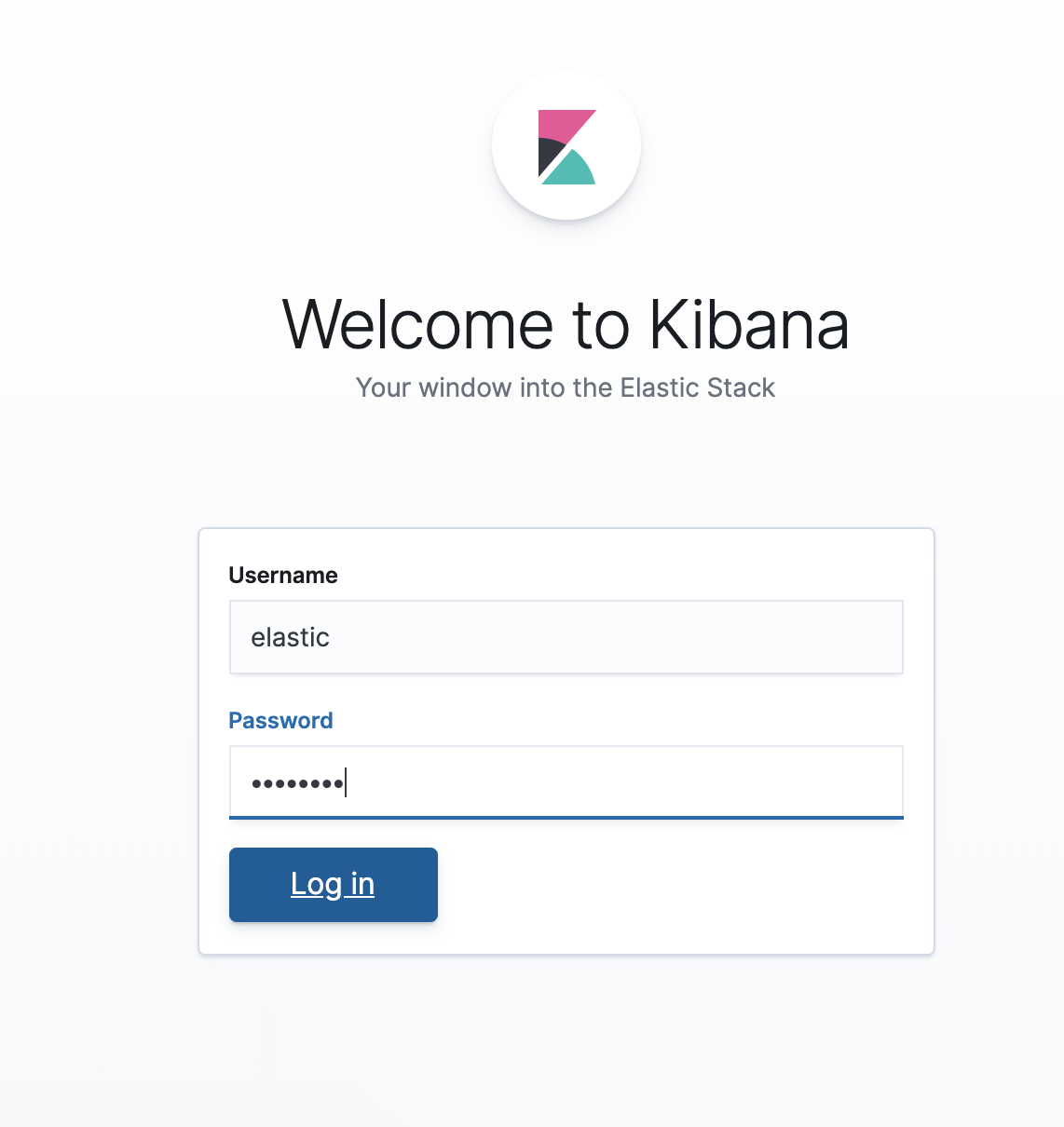

각각의 config파일에서 id,pw를 설정 한 대로 로그인 하면 된다.

설정파일을 따로 바꾸지 않았다면, id는 elastic, pw는 changeme 이다

Verifying in Kibana

Log in using the id and password configured in each config file.

If you haven't changed the configuration file, the id is elastic and the password is changeme.

After logging in, you can confirm that the index pattern exists and that the log data has arrived.

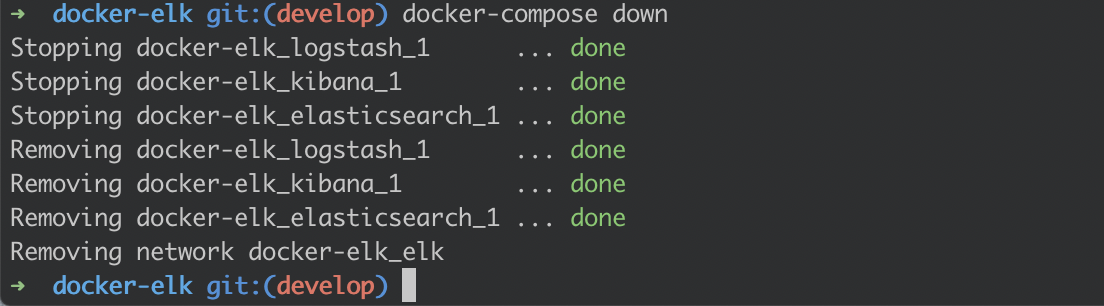
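The same check can be made without the Kibana UI by querying Elasticsearch's _search endpoint for the elk-logger index. The sketch below uses only the standard library; the index name and the default credentials come from the configuration shown earlier, while the host http://localhost:9200 and the function names are assumptions for illustration.

```python
import base64
import json
import urllib.request


def build_search_request(
    index: str = "elk-logger",
    host: str = "http://localhost:9200",
    user: str = "elastic",
    password: str = "changeme",
    size: int = 5,
) -> urllib.request.Request:
    """Build a GET request for the index's _search endpoint with basic auth."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        f"{host}/{index}/_search?size={size}",
        headers={"Authorization": f"Basic {token}"},
    )


def fetch_recent_logs(request: urllib.request.Request) -> list:
    """Send the request and return the list of matching documents (hits)."""
    with urllib.request.urlopen(request, timeout=5) as response:
        body = json.load(response)
    return body["hits"]["hits"]


if __name__ == "__main__":
    # Requires the docker-elk containers to be running.
    for hit in fetch_recent_logs(build_search_request()):
        print(hit["_source"])
```

If the index does not exist yet, for example because the Flask route has not been called, Elasticsearch answers with a 404 and urlopen raises urllib.error.HTTPError.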

$ docker-compose down

When you are done, the command above stops and removes the containers.